After a hiatus of a year I held an on-line meeting for East Midland mappers back in March, with another in April. March was an important anniversary as we held our first meeting in March 2011, so it was the group's 10th anniversary. This is a little bit about recent meetings, but mainly a chance to share what works for us in the hope it may help others contemplating trying to get a local group together.

2021 Meetings

Somewhat daringly we agreed to meet in person in May, albeit with a number of changes designed to mitigate risk from covid: a quiet location, an afternoon meeting time, location reachable quickly by public transport by participants, possibility of being outdoors. Fortunately the venue we use in Derby, The Brunswick, meets all these criteria.

I was a little shocked on viewing OSMCal that we were in the vanguard of OSM local meetings returning to in-person gatherings. My appetite for risk is perhaps higher than I thought, given that I have spend much of the past 15 months shielding as a CEV (clinically-extremely vulnerable) person. Even by May I had only made 1 shopping trip into the city centre, and had not been on public transport or visited a supermarket since March 2020. This was also my first meeting with friends rather than family or neighbours.

It all felt remarkably normal. The pub was quiet, service discrete and speedy. Despite my initial misgivings I felt comfortable indoors (it was pretty cold at the end of May & I was wearing winter-levels of clothing). I think it helps that all of us have been vaccinated, most with 2 doses, and, as discussed below, as a group most of us have a low number of day-to-day contacts. In fact I felt able to take my father there a week later for his birthday.

I can't remember exactly which subject we touched on in May, but topics we have talked about this year:

- Locked gates. This was a particular desire of John Stanworth who has been mapping them fairly systematically around Sheffield. SomeoneElse now shows them on his hiking map style for the UK & Ireland (see changelog entry for March 29).

- Static railway carriages mapped as building=railway_carriage

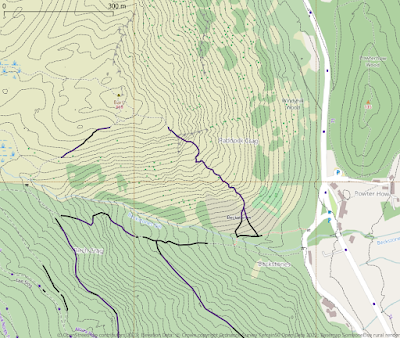

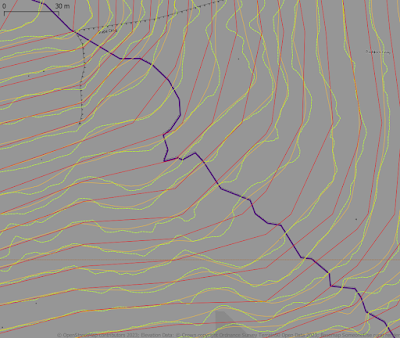

- Trackless walking & hiking routes. These are very common in Scotland where very well known ways up mountains have very few traces on the ground, and even when they exist they are often hard to tell from paths made by deer or sheep. Although walkers will not follow exactly the same route, they are relatively easy to verify from experience and knowledge. Often a path does appear high-up the hill as route options coalesce. This is an example from my own surveying in North Wales. Similar issues occur for backcountry skiing, alpine and ski mountaineering routes and bushwhacking in the Adirondacks. I've never found a tagging combination which meets this need, other than using a highway tag seems wrong, and potentially dangerous. (An side aspect are parts of regular trails which are for practical purposes invisible, and therefore should probably not be mapped as highway=path or highway=footway).

- Simple 3D buildings and F4map. Paul the Archivist has explained his approach in a diary entry.

- Gate types. I learnt something reading the wiki, in that wicket gates originally referred to small gates in larger doors or gates. Despite having passed through one many times a day for 3 years I never heard it referred to by that term, and in general my image is much more related to a gate which looks like part of a wicket fence (presumably influenced by cricket wickets). There are numerous tags which are duplicated across barrier and gate[_:]type: bump gate, kissing gate, lych gate etc. From a walker's perspective often the most important thing is whether the gate is meant for pedestrians or vehicles.

- Electric Vehicle chargers. Rovastar gave a detailed breakdown of the complexity of mapping electric vehicle chargers, and how it will also be problematic to show the data once they become ubiquitous. I'm hoping he might write this up as a diary entry: he's thought about the issues in depth.

Whether we meet next month really depends on the state of the Covid pandemic. Current doubling times make me pessimistic.

East Midlands Local Group

After 10 years it's worth taking stock of where we are, mainly because there may be things of value for other local group organisers. Some of these notes were written originally for Kyle Pullicino, who asked for information on the talk mailing list, although they were always written with this purpose in mind.

I co-organised the original Nottingham OSM get-together nearly 10 years

ago, and I have more-or-less organised, or, perhaps better, facilitated,

these activities ever since.

This is a long post as I'm taking

the opportunity to provide some detailed thoughts on these 10 years.

First, I describe our group, what we do & things I've thought of

doing. Second, I try & make some concrete recommendations.

We meet regularly (or did until Covid-19) once a month. We get anywhere from 3-8 people on a regular basis with a regular core of 5-6. We have a number of very active mappers (at one stage 3-4 in top 100 worldwide), and people active in the wider OSM community (OSM-Carto, weeklyOSM, DWG, SotM volunteers, etc.). A couple of members run or are major contributors to OSM-related websites or resources (OSM-Nottingham, Evesham Mapped and switch2osm.org). We have a steady, but small, number of people who pop-in to ask specific questions or just to meet people, these might be mappers, developers or academics.

Location

Our meetings are held in a pub, partly because OSM in the UK features a lot of folk who like pubs, but also for mundane practical reasons. People who work can't meet in the day time, relatively few coffee places are open later in the evening, it's free (except for drinks & in a larger group one can join without having to buy a drink & most pubs do a good range of non-alcoholic drinks these days), relatively easy to find a central location. From my point of view, if no-one turns up I can still sit quietly with a drink for a while, and I'm not risking a not insignificant part of my monthly income on venue hire. I also try & choose one which has some food options (some people may come straight from work) & caters for a range of dietary choices (gluten free & vegetarian I always check). In London suitable adjacent fast-food options are more common, but this breaks up the meeting as people leave to buy food.

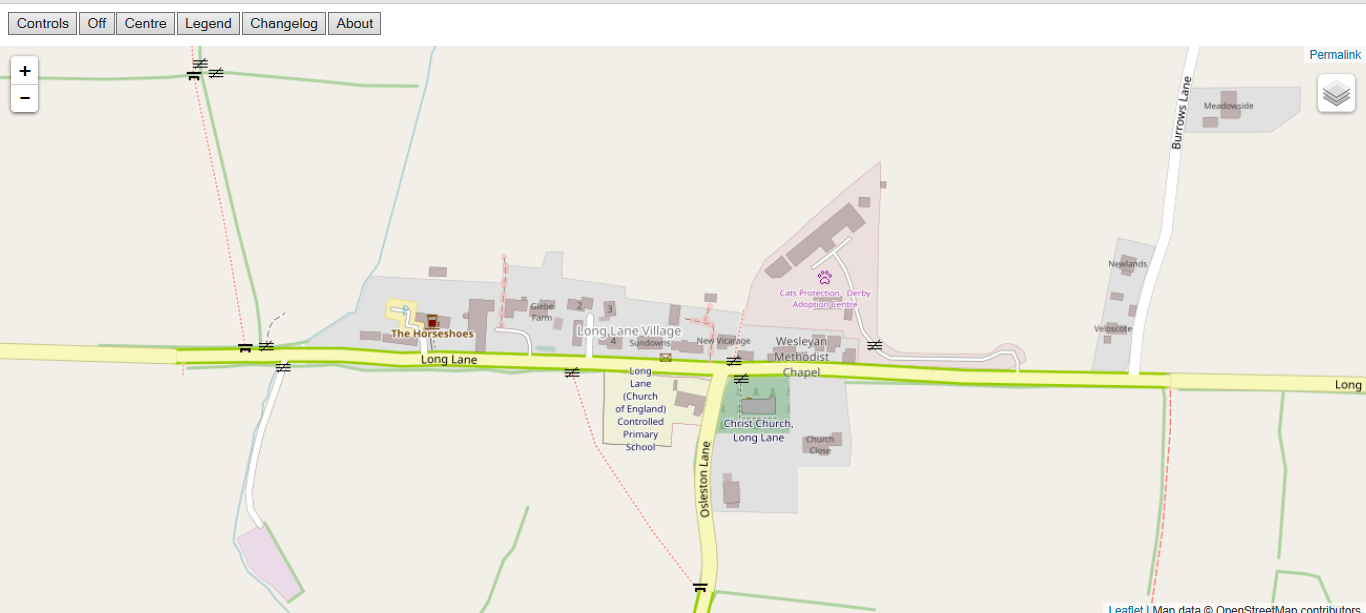

For location we chose somewhere central convenient for public transport (within 10 minutes walk of different options). This has been very useful as we now get people coming from further afield. For the past few years we've used other towns as well & this brings in different people.

Time & Date

For personal reasons weekend meetings were not possible for me: I do other things at weekends, caring responsibilities etc.. I suspect the same is true of most of our other attendees too. I do organise an Saturday meeting once or twice a year with the objective of mapping footpaths, where we share lunch together. The Norwich group have been successful with a Saturday morning meeting, followed by coffee or lunch.

I think it's generally true that people who map in OpenStreetMap may have a lot of other shared interests. Many of our group are keen walkers, cyclists, followers of folk music, owners of allotments, real ale enthusiasts, interested in historic buildings and so on. These shared interests do help a group to gel, but perhaps make it harder for newcomers to feel included.

Who we are

We are, for the most part, men with an IT/STEM background over 40 with perhaps more over 60 than under. The age range is not untypical of the OSM mappers in the UK, but in other countries typical contributors may be younger (France, Germany, Spain, much of Latin America to my knowledge). Our youngest member, turned 30 last year. She is a professional geographer, coming to our first meeting when she was a student, but pretty much shares the interests I list above. Our oldest members are in their late '60s or early '70s.

Also, fairly typically for OSM, the vast majority of us have degrees with perhaps half having a masters or doctorate. A very high proportion either run their own business or work for small companies, and quite a few of us are retired. Many worked from home long before the Covid pandemic. This may reflect the STEM-bias found across the board in OSM contributors, but may also represent a pattern of working which is more compatible with contributing to OSM.

From a personal viewpoint this has all been very rewarding, these people are good friends (in fact the monthly OSM meeting has become my most regular social gathering, because other ones fell by the wayside when people's caring responsibilities, including my own, grew too much. I think this also sustains the group.

I should add that a perhaps under-appreciated aspect of the group is how many have had some caring responsibilities: largely for elderly parents, but also grandchildren and one diabetic cat. Another related factor is that in the 10-year time-frame about half of us have lost one parent; and shockingly we lost a member, David Evans, when he was in his early 40s. This means some useful conversations have been about night-sitting care-workers or getting probate. The downside is, as I say, it may be harder for newcomers to feel able to join or contribute to: a) an existing group with its own dynamic; or b) a group which is different from oneself. I have no good answers to these issues, but perhaps having different forms of meeting may attract different groups.

Mapping Activities

During the Summer months (April-October) we provide for an hour of mapping activity before meeting (largely determined by how long I can make available beforehand). At the outset we did this together as it was a vehicle for explaining OSM to people. More recently we meet & go off & do our own thing, although I am always available to show someone the ropes if a newcomer turns up. Our pub locations are deliberately chosen to maximise the range of different urban landscapes within a short distance. For the past few years we have concentrated on updating shops in the city centre (which is not just good for explaining OSM to people, but also fits with a constrained mapping time, and for exploring what continuous maintenance of data entails). This is a good opportunity to show different editors (GoMap, Vespucci, Street Complete etc).

What we talk about

Once in the pub we probably focus the first 45-60 minutes to discussing mapping problems: particularly as we have someone who comes with a long list of such questions (see above for this month's issues). General gossip about events in the broader OSM community may also happen. After that our discussions become much more social. The format works well for someone wanting some specific answers as they can leave after the first hour.

Alternative types of Events

Things I haven't done, which might be worth considering:

- Formal talks (like Geomob in London), this could work well, but requires a suitable space, speakers & much more preparation. Other tech groups such as Linux User Groups use this approach.

- Half or one day workshops. Again more work (& possibly more than one person). Topics from mapping through to configuring a render server or using QGIS

- Missing Maps sessions or similar humanitarian mapping. These sessions appear to be very popular and can be held in small or large settings. They attract a different group of people (in London, more young people, more women & more GIS professionals). On the other hand I think there is a poor transference from mapping for humanitarian purposes to mapping locally (not so true the other way), so it might not build a local mapping community

- Interacting with local student groups. I've done a bit, but have meant to do more.

- Linking up with other like-minded orgs: Wikimedia, tech user groups etc.

One thing I would caution is that creating a group may not actually increase the number of mappers you have. We are pretty much the same set of active mappers as 10 years ago, although we have acquired one new active mapper in the past 3 years.

Starting out: recommendations for a local group

This is very much based on what I did. No doubt there are other ways of doing it.

- Decide on an initial meeting format, location & time well in advance. In the current circumstances that may well be a Zoom online meeting or similar. You need to use your judgement (& personal circumstances) to choose these. You may want to consider if these are suitable for a repeating event, as it's much easier to schedule follow-ups on that basis.

- Contact as many active mappers as you can (via OSM messaging, email). Contact local interest groups: walkers, cyclists, tech, wikimedia etc). You can canvas people for themes at this point which can set a structure for the meeting.

- Once you have basic interest & a time & place for a meeting, advertise it more widely (OSM mail, twitter, facebook .....). It's difficult to underestimate just how many different communication methods are used by those interested in OSM. Add it to OSMCal and then it will be included in weeklyOSM. Write an OSM Diary entry (& then we can write a news item about it in weeklyOSM).

- Have the meeting! Importantly, check at the end if people want to do it again, what frequency they feel is suitable (monthly, every 6 weeks & quarterly are good choices) & what things they would like to do (social/mapping etc).

- Write up what you did. I did this for the first few meetings, but it can me more of a burden later on.

- If all goes well, schedule meetings for the rest of the calendar year. It makes organising easier & helps diary management; and also helps attendees.

Personal Motivation

Lastly, think a bit about your own goals of trying to organise the community. I know we have woefully failed at my original one to get 10 highly active mappers in the city of Nottingham, on the other hand we do provide a continuous point-of-contact for local people to find out about OSM. Getting to know each other, what we are interested in, what & how we map have been immensely beneficial in terms of creating a more communal approach to mapping. It's often much easier to discuss how to map something face-to-face than by email & even better if you stand in front of it.

Whatever you do, only take on that which you feel comfortable with. It's quite a lot of work (at least initially) and there are lots of things it would be nice to do if one had the time, skills etc. OSM is built on 'good enough' not being perfect. Ideally, you might find people with complementary skills who might be interested in doing some of the other things.

People do run out of steam doing these things, and its worth recognising it. The one thing I did find extremely demotivating was the "Craftmappers" post by Michal Migurski back in 2016. For a few days I felt like throwing in the towel, and leaving the group to its own devices. I think for many of us it is important to avoid much of the mud-slinging which purports to be discussion in many OSM channels (for this reason I do not subscribe to many mailing lists), and focus on what works for us at a local level.

Local groups are not so common that they do not need a little bit of nurturing. I'd like the OSM Foundation and local chapters to consider this more seriously.