Stanage Edge, Derbyshire

Stanage Edge, Derbyshire. The site of hundreds of hyperlocal names used by rock climbers.

Photo courtesy of Earthwatcher BY-NC-ND

We had an interesting discussion in March on the OpenStreetMap talk-gb mailing list, arising out of some controversial naming practices in Loch Lomond and Trossachs National Park ("Giro Bay"). Someone suggested that no-one would or should ever add newly minted names to OSM.

This is an extended account of why I think that names widely recognised in specialist communities should be used when appropriate. I call these names "hyperlocal", because many of them name features or localities which people outside the relevant community would not recognise, or would see no need to name.

Naturalists. This whole post started because someone expressed surprise that 'non-official' names might be entered in OpenStreetMap (all my examples are from Attenborough Nature Reserve, Nottinghamshire). Naturalists, and particularly those managing and using nature reserves, are creating new names all the time. In part this is because the places themselves are often new (for instance old industrial workings and quarries: "The Delta", "The Bund"), but mainly it is because much greater precision is required for location information. This is really important if one wants to find the only example of a rare plant, check on a bird-box location, photograph a rare fungus, or make sure the right tree gets chopped down. One also becomes increasingly aware of minor differences which dramatically affect the range of plants and animals over a small area. In principle this could be communicated by using grid references and, at a fine scale, a GPS. In practice names are more memorable, help to delineate areas in a way which grids don't, and are much easier to communicate.

Some names stick; others may be concocted but never enter common usage. Some places have multiple names, and it takes a long time to reach a consensus ("Old Car Park", "Fishermans Car Park", "Education Meadow", "Corbett's Meadow"). Many names have multiple intelligible variants ("Butterflies", "Butterfly Triangle", "Butterfly Patch"). In other cases it's possible to see how a name changes. For several years charcoal has been made at Attenborough: the area by the kiln is now nearly always referred to as "by the Kiln", whereas it used to be called "Redwings". In other places locations are known by number alone, for instance 'compartment 73'. I have a map of compartment numbers for Clumber Park, but it's an internal National Trust document; the numbers are used in the national Fungal Records database, though.

I have added several of these 'made-up' names which are in common usage around Attenborough Nature Reserve: the Delta, Warbler Dell, Dirty Island Bank, Butterfly Patch (in use since the 1960s), Corbett's Meadow (a recent 'official' coinage, in memory of Keith Corbett, who was reserve manager for over 30 years until his death in 2007), which is also known locally as "The Fisherman's Car Park" and "The Old Car Park", and Education Wood (a recent unofficial coinage, around 2005). As all the water bodies were created by gravel working, their names have evolved recently too. I have only added those which are in widespread usage: there are perhaps 50-odd names which were coined in the '60s and '70s, mostly eponymic toponyms, but many never caught on.

Carsington Reservoir is another location where local birders have evolved a significant number of toponyms. Any of the Helm "Where to Watch Birds" regional guides will provide many more examples.

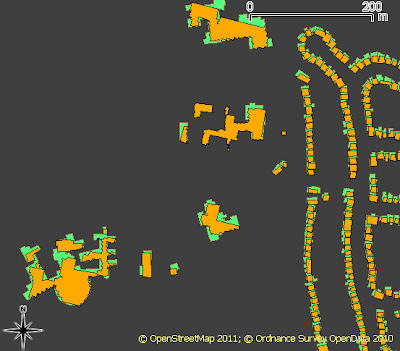

Climbers. Hill and mountain areas are chock full of named features which might never be noticed. This isn't surprising: the cliff shown at the top of this post, Stanage Edge, has hundreds of climbs along its length. The convention is that the first people to successfully climb a route have naming rights, but it's more complicated than that. An even smaller crag further south in Derbyshire, Birchen Edge, has a convention that climb names should have a nautical allusion (Trafalgar Wall, Camperdown Crawl). I would think that in Derbyshire alone there are probably well over 5000 named climbing routes. Many of these will be less than 20 m of ascent. On the harder ones each hold will be memorable (I'm not aware of these being named yet). On the other hand some places fall into disuse. My father and his friends used to climb at Laddow Rocks because it was accessible by bus from the Manchester area. Much of Laddow is now mossy and green, but everything on it still has a name: several guidebooks were published.

Oddly, in bigger mountains such as the Alps there is not this degree of minutiae in naming, or if it exists it's not published so widely. A number of factors influence this. Climbing in the Alps tends to follow obvious lines because there are a lot more of them, and they're much longer. Sport climbing has used pictorial 'topos' for a long time, so it avoids the need for names that a narrative descriptive system has. There are still plenty of these names though. The Eiger Nordwand has lots, all used in many mountaineering books, and one giving a book its title: Hinterstoisser Traverse, The White Spider, the Third Ice Field, Traverse of the Gods, Exit Cracks and so on. That is just one face of one mountain, albeit a famous one, and there's enough variety in the type of names to give a feel for how they were created.

Skiers are another group who go into the mountains for recreation. In many ski resorts the names are largely invented and often follow a stereotyped pattern, typically with lots of alpine fauna: White Hares, Pika, Marmots, Chamois, Ibex, Ptarmigan. The older European resorts still use lots of names which arose naturally from older uses.

St Anton in the Tirol has many ski runs and locations named after alpine meadows. Some of these, such as Gampen (often Gampli), have an interesting linguistic history, indicating the presence in the area of speakers of a Latin-derived language similar to modern Romansch. Mattun is another name ultimately derived from Latin, referring to a characteristic member of the mountain vegetation. Both of these are fairly common place name elements close by in the Eastern Graubünden. (Place name etymology from the Swiss Alpine Club guides.) In addition to the regular runs, St Anton has a host of well-known, named, off-piste runs. The photo shows the top of a broad but steep gully off the Schindlerspitze, but I never learnt the names of these gullies. Some of the seriously steep chutes have names which are closely guarded secrets, passed on only to those who have skied them.

On the Grands Montets many apparently non-obvious features have names (often different names in different languages): Combe des Amethystes or the Italian Bowl; Canadian Bowl. Again these are not to be found on maps, but are widely used by people who know the area. One or two specialised publications use them too. It's possible to ski a line a few metres from the Italian Bowl and not be aware that it's there: even then, finding the direct entry which gives an exhilarating steep start requires precise knowledge of the local geography. So once again, these names have arisen from a need for much greater precision than might otherwise be expected.

Ski racing requires a heightened awareness of terrain, which again tends to drive increasing precision in naming. One of the oldest open downhill races in the Alps is the Parsenn Derby. When I first visited Davos the piste which formed the lower part of the course had markers giving the names of each turn and schuss. Nowadays the course is shorter still, but still features places like the 'S-bends' and the 'Derby Schuss'. It's not for wimps like me.

Motor Racing. Anyone who has followed a Grand Prix will know that every part of the track has a name. Of course on a modern track these names will have been invented and assigned by marketeers, but on courses with a long history, like Silverstone, it's easy to guess how the names came about. Early users probably said things like 'I came off at the corner near Stowe', which, being cumbersome, would rapidly get shortened to Stowe Corner. Of course names were useful to everyone: drivers, instructors, marshals, commentators and spectators. Now they are integral to what people expect of a motor racing track. Many of these are captured on OSM, including the circuit featured on Top Gear.

Fishing. I know little about fishing, but it struck me that fishermen must have names for favourite spots, and then I came across the wonderful annotations of Walking Papers by Kirk Lombard. The one I show above is near Fisherman's Wharf, San Francisco, and includes one fisherman's name, "Striped Perch Hole". Incidentally, this seems to be a great example of using Walking Papers (and OSM) in an innovative and unexpected way.

Names seem to evolve in each of these situations in similar ways. Often they are created by pragmatic shortening of a description. Memorable events, and the people associated with them, are another rich source of names. Things also get named after people as a token of appreciation, respect or a wish to remember them. Thematic naming sometimes works, but often ends up being insufferably twee, and is often resisted or subverted, particularly by wit.

One last example is the local names for the swimming holes on Fairham Brook by Keyworth Meadow NR: see Neil Pinder's article on the parish website. I've asked around for other examples, and someone with extensive experience of forestry noted that workers named landscape features in a very similar way to naturalists. I'd be very interested to hear of other examples, particularly outside the interest domains I've covered in this post.

The ability to map these hyperlocal toponyms is a very attractive part of OSM. Of course, they need to be researched accurately to ensure they are names which are actually used rather than 'book-names'.